【IBM POWER8 评测 Part1:A Low Level Look at Little Endian】 Assessing IBM’s POWER8, Part 1: A Low Level Look at Little Endian

source:http://www.anandtech.com/show/10435/assessing-ibms-power8-part-1

自翻,转载请注明出处。

估计国内媒体不会有任何兴趣去评测POWER8吧。

A POWER8 FOR EVERYONE【平民的POWER8】

It is the widest superscalar processor on the market, one that can issue up to 10 instructions and sustain 8 per clock: IBM’s POWER8. IBM’s POWER CPUs have always captured the imagination of the hardware enthusiast; it is the Tyrannosaurus Rex, the M1 Abrams of the processor world. Still, despite a flood of benchmarks and reports, it is very hard to pinpoint how it compares to the best Intel CPUs in performance wise. We admit that our own first attempt did not fully demystify the POWER8 either, due to the fact that some immature LE Linux software components (OpenJDK, MySQL…) did not allow us to run our enterprise workloads.

【它是市面上拥有最宽发射的超标量处理器,拥有8分派10发射的规格:IBM POWER8。POWER系列一直是硬件发烧友想象中的霸王龙级别处理器。虽然已经有了大量测试,但现在还是很难将POWER系列和Intel的X86性能进行精确的对比。我们承认之前的首次尝试没有完全发挥出POWER8的性能,因为有些不成熟的小端Linux软件组件(OpenJDK,MySQL…)不允许我们运行企业级负载。】

Hence we’re undertaking another attempt to understand what the strengths and weaknesses are of Intel’s most potent challenger. And we have good reasons besides curiosity and geekiness: IBM has just recently launched the IBM S812LC, the most affordable IBM POWER based server ever. IBM advertises the S812LC with “Starting at $4,820”. That is pretty amazing if you consider that this is not some basic 1U server, but a high expandable 2U server with 32 (!) DIMM slots, 14 disk bays, 4 PCIe Gen 3 slots, and 2 redundant power supplies.

【因此我们现在将再次试图了解这个Intel最具潜力对手的长处和短处。除了出于好奇心和Geek本能,我们还有另外的好理由:IBM最近发布了IBM S812LC,史上最便宜的POWER服务器。起步价4820刀。【这差不多是Intel的一半价格】要知道这不是某些低端1U服务器,而是高拓展性的2U服务器。它带有32条内存插槽,4个PCIe3.0插槽,2个电源。】

Previous “scale out” models SL812 and SL822 were competitively priced too … until you start populating the memory slots! The required CDIMMs cost no less than 4(!) times more than RDIMMs, which makes those servers very unattractive for the price conscious buyers that need lots of memory. The S812LC does not have that problem: it makes use of cheap DDR3 RDIMMs. And when you consider that the actual street prices are about 20-25% lower, you know that IBM is in Dell territory. There is more: servers from Inventec, Inspur, and Supermicro are being developed, so even more affordable POWER8 servers are on the way. A POWER8 server is thus quite affordable now, and it looks like the trend is set.

【虽然之前的SL812和SL822价格也很不错…直到你看到了内存槽!要求的CDIMM内存价格是RDIMM的4倍以上,对于需要大量内存的客户它们就没有了吸引力。S812LC没有这种问题:它直接可以用便宜的DDR3 RDIMM。要知道Dell也做IBM服务器,所以实际价格要低20-25%。此外Inventec, Inspur, 和Supermicro的也在开发中,所以将来会有更便宜的POWER8服务器。因此POWER8现在已经很便宜了,而且还有变得更便宜的趋向。】

To that end, we decided that we want to more accurately measure how the POWER8 architecture compares to the latest Xeons. In this first article we are focusing on characterizing the microarchitecture and the “raw” integer performance. Although the POWER8 architecture has been around for 2 years now, we could not find any independent Little Endian benchmark data that allowed us to compare POWER8 processors with Intel’s Xeon processors in a broad range of applications.

【为此我们想要更加精确地对比POWER8和Intel最新的至强。这篇文章将会集中在微架构和原始整数性能上。虽然POWER8已经发布两年,我们还是不能找到任何能把POWER8和至强作对比的小端测试数据。】

Notice our emphasis on “Little Endian”. In our first review, we indeed tested on a relatively immature LE Ubuntu 14.04 for OpenPOWER. Some people felt that this was not fair as the POWER8 would do a lot better on top of a Big Endian operating system simply because of the software maturity. But the market says otherwise: if IBM does not want to be content with fighting Oracle in an ever shrinking high-end RISC market, they need to convince the hyper scalers and the thousands of smaller hosting companies. POWER8 Server will need to find a place inside their x86 dominated datacenters. A rich LE Linux software ecosystem is the key to open the door to those datacenters.

【注意我们强调了“小端”。在之前的测试,我们用了相对不成熟的OpenPOWER版小端Ubuntu14.04。一些人觉得这对POWER8不公平,因为POWER8在成熟的大端操作系统中能够发挥的更好。然而市场要求则相反:如果IBM不满足于和甲骨文在不断缩小的高端RISC市场竞争,他们就需要说服更多的小企业购买POWER。在X86统治下的数据中心市场中,POWER8需要找到一个落脚点。小端Linux软件的生态系统是打开市场的关键。】

When it comes to taking another crack at our testing, we found out that running Ubuntu 15.10 (16.04 was just out yet when we started testing) solved a lot of the issues (OpenJDK, MySQL) that made our previous attempt at testing the POWER8 so hard and incomplete. Therefore we felt that despite 2 years of benchmarking on POWER8, an independent LE Linux-focused article could still add value.

【我们发现运行Ubuntu15.10(16.04在测试时候才刚出来)时解决了之前的很多问题。因此我们觉得在两年的POWER8测试之后,一个独立的小端Linux测试依然有价值。】

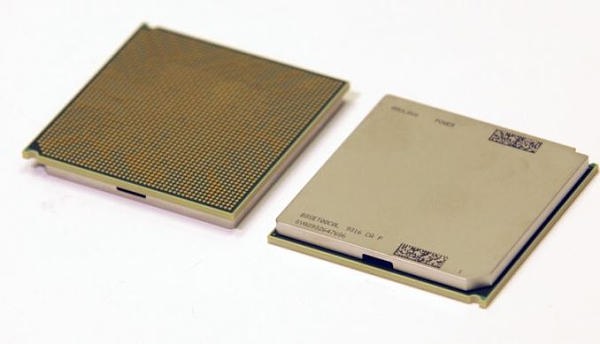

Inside the Beast(s)【猛兽之心】

When the POWER8 was first launched, the specs were mind boggling. The processor could decode up to 8 instructions, issue 8 instructions, and execute up to 10 and all this at clockspeed up to 4.5 GHz. The POWER8 is thus an 8-way superscalar out of order processor. Now consider that

【当POWER8发布时,它的规格让人难以置信。它每周期可以解码最多8个指令,发射8个指令,执行最多10个指令,并且全部核心最高可达4.5Ghz。因此POWER8是一个8-way超标量乱序执行处理器。】

- The complexity of an architecture generally scales quadratically with the number of “ways” (hardware parallelism)【随着way数量增加,架构的复杂性也以二次方形式增加】

- Intel’s most advanced architecture today – Skylake – is 5-way【Intel最先进的Skylake是5-way】

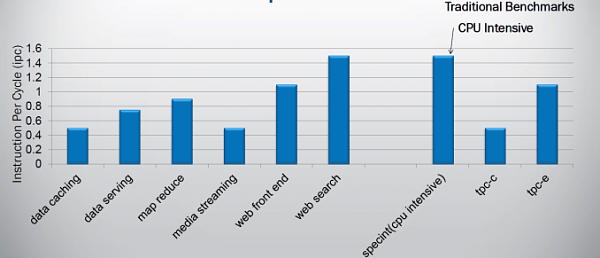

and you know this is a bold move. If you superficially look at what kind of parallelism can be found in software, it starts to look like a suicidal move. Indeed on average, most modern CPU compute on average 2 instructions per clockcycle when running spam filtering (perlbench), video encoding (h264.ref) and protein sequence analyses (hmmer). Those are the SPEC CPU2006 integer benchmarks with the highest Instruction Per Clockcycle (IPC) rate. Server workloads are much worse: IPC of 0.8 and less are not an exception.

【所以这是个很激进的做法。如果你只看软件并行性,这简直是自杀行为。实际上大多数现代CPU在运行垃圾邮件过滤(perlbench)、视频解码(h264.ref)和蛋白质序列分析(hmmer)式平均每周期执行2条指令。这些已经是SPEC CPU2006整数测试中IPC最高的了。服务器负载IPC差得多:正常情况低于0.8。】

It is clear that simply widening a design will not bring good results, so IBM chose to run up to 8 threads simultaneously on their core. But running lots of threads is not without risk: you can end up with a throughput processor which delivers very poor performance in a wide range of applications that need that single threaded speed from time to time.

【很明显更宽的设计不会带来好的结果,所以IBM选择了1个核心同时运行8条线程【SMT8】。但这样做也是有风险的:最终可能会在需要单线程性能的程序中得到很差的成绩。】

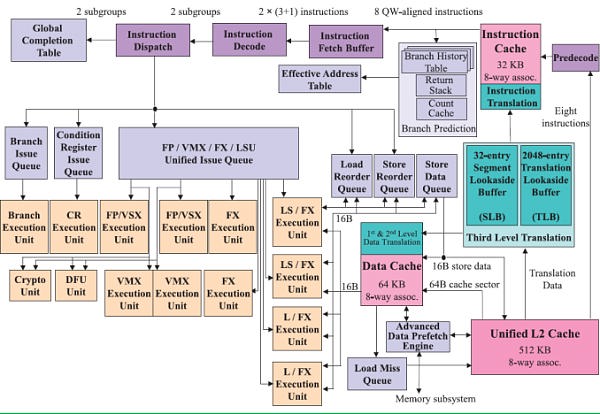

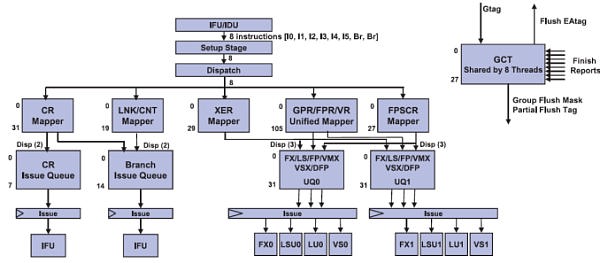

The picture below shows the wide superscalar architecture of the IBM POWER8. The image is taken from the white paper “IBM POWER8 processor core architecture”, written by B. Shinharoy and many others.

【下面是POWER8的超标量架构。来自于POWER8的白皮书。】

The POWER8+ will have very similar microarchitecture. Since it might have to face a Skylake based Xeon, we thought it would be interesting to compare the POWER8 with both Haswell/Broadwell as Skylake.

【POWER8+也会有非常相似的架构。它的对手可能是Skylake至强。】

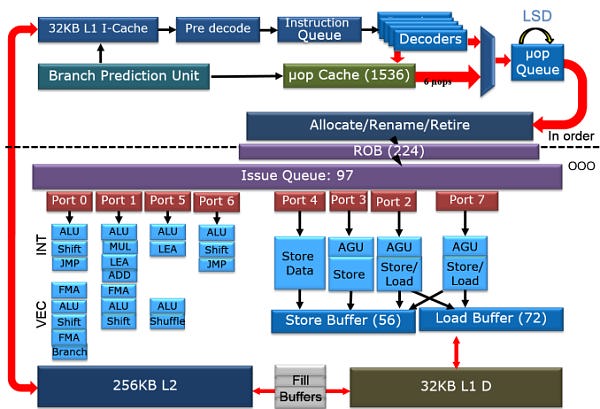

The second picture is a very simplified architecture plan that we adapted from an older Intel Powerpoint presentation about the Haswell architecture, to show the current Skylake architecture. The adaptations were based on the latest Intel optimization manuals. The Intel diagram is much simpler than the POWER8’s but that is simply because I was not as diligent as the people at IBM.

【下面是我们拿简化的Haswell架构ppt改了一下,来代表现在的Skylake。改动都是根据Intel的手册来的。我做的Intel的图比POWER8的简单多了,原因很简单,因为我没IBM的人那么勤奋。】

It is above our heads to compare the different branch prediction systems, but both Intel and IBM combine several different branch predictors to choose a branch. Both make use of a very large (16 K entries) global branch history table. Both processors scan 32 bytes in advance for branches. In case of IBM this is exactly 8 instructions. In case of Intel this is twice as much as it can fetch in one cycle (16 Bytes).

【比较这两者的分支预测系统难度太大,但Intel和IBM都结合了几个不同的分支预测器来选择分支。这两者都采用了非常大的全局分支历史表(16K entries),都在分支前预扫描32个字节。在POWER8上是刚好8条指令,在Intel上是一个周期抓取指令数(16字节)的两倍。】

On the POWER8, data is fetched from the L2-cache and then predecoded into the L1-cache. Predecoding includes adding branch, exception, and grouping. This makes sure that predecoding is out the way before the actual computing (“Von Neuman Cycle”) starts.

【POWER8上,数据先从L2中提取,然后预解码进L1。预解码包括增加分支、除外和聚合。这保证了预解码阶段位于实际计算(冯 诺依曼循环)开始之前。】

In Intel Haswell/Skylake, instructions are only predecoded after they are fetched. Predecoding performs macro-op fusion: fusing two x86 instructions together to save decode bandwidth. Intel’s Skylake has 5 decoders and up to 5 ?op instructions are sent down the pipelines. The current Xeon based upon Broadwell has 4 decoders and is limited to 4 instructions per clock. Those decoded instructions are sent into a ?-op cache, which can contain up to 1536 instructions (8-way), about 100 bits wide. The hitrate of the ?op cache is estimated at 80-90% and up to 6 ?ops can be dispatched in that case. So in some situations, Skylake can run 6 instructions in parallel but as far as we understand it cannot sustain it all the time. Haswell/Broadwell are limited to 4. The ?op cache can – most of the time – reduce the branch misprediction penalty from 19 to 14.

【在Haswell/Skylake上,指令只在提取后预解码。预解码中执行微指令融合:将两个X86指令融合起来,节省解码带宽。Skylake有5个解码器,最多5个微指令会送到后端管线中。当前的Broadwell至强有4个解码器,每周期只有四条指令。解码后的指令会被送进微指令缓存,该缓存可以容纳最多1536条指令(8-way),宽度大概100bit。微指令缓存的命中率大概在80-90%,这种情况下最多6条微指令能被分派。所以在某些情况下,Skylake可以并行执行6条指令,但并不能一直保持这个状态。而Haswell/Broadwell最多都只有4条。微指令缓存在大多数情况下可以将分支误预测开支从19减少到14个循环。】

Back to the POWER8. Eight instructions are sent to the IBM POWER8 fetch buffer, where up 128 instructions can be held for two thread(s). A single thread can only use half of that buffer (64 instructions). This method of allocation gives each of two threads as much resources as one (i.e. no sharing), which is one of the key design philosophies for the POWER8 architecture.

【回到POWER8。8条指令被送进提取缓存,在这里最多可以为两个线程保留128条指令。一个线程则只能用一半缓存(64条指令)。这种分配方式使得双线程和单线程能得到同样的资源,这是POWER8架构设计的关键原则之一。】

Just like in the x86 world, the decoding unit breaks down the more complex RISC instructions into simpler internal instructions. Just like any modern Intel CPU, the opposite is also possible: the POWER8 is capable of fusing some combinations of 2 adjacent instructions into one instruction. Saving internal bandwidth and eliminating branches is one of the way this kind of fusion increases performances.

【和X86一样,解码单元将复杂的RISC指令分拆为简单的内部指令。与IntelCPU一样,反过来做也是可以的:POWER8可以将两条相邻指令融合为一条。节省了内部带宽并排除分支,提升性能。】

Contrary to the Intel’s unified queue, the IBM POWER has 3 different issue queues: branch, condition register, and the “Load/Store/FP/Integer” queue. The first two can issue one instruction per clock, the latter can send off 8 instructions, for a combined total of 10 instructions per cycle. Intel’s Haswell-Skylake cores can issue 8 ?ops per cycle. So both the POWER8 and Intel CPU have more than ample issue and execution resources for single threaded code. More than one thread is needed to really make use of all those resources.

【与Intel的统一队列相反,POWER有三种不同的发射队列:分支、状态寄存、以及“Load/Store/浮点/整数“队列。前面两个每周期可以发射一条指令,第三种每周期可以发射8条,加起来每周期10条。Intel的Haswell-Skylake每周期发射8条微指令。所以对于单线程代码,这两者都有过多的发射和执行资源。如果想要完全利用这些资源,需要两个以上线程。】

Notice the difference in focus though. The Intel CPU has half of the load units (2), but each unit has twice the bandwidth (256 bit/cycle). The POWER8 has twice the amount of load units (4), but less bandwidth per unit (128 bit per cycle). Intel went for high AVX (HPC) performance, IBM’s focus was on feeding 2 to 8 server threads. Just like the Intel units, the LSUs have Address Generation Units (AGUs). But contrary to Intel, the LSUs are also capable of doing simple integer calculations. That kind of massive integer crunching power would be a total waste on the Intel chip, but it is necessary if you want to run 8 threads on one core.

【当然也要注意到不同点。Intel CPU有一半的(2个)load单元,但每个单元有两倍带宽(256bit/周期)。POWER8有两倍的load单元(4个),但每单元带宽更少(128bit/周期)。Intel追求高AVX(HPC)性能,IBM专注于运行2到8个服务器线程。与Intel一样,LSU都挂了AGU,但与Intel相反的是,POWER的LSU也能够做简单的整数计算。这种强大的整数计算能力如果在Intel芯片上的话就是浪费,但对于SMT8的POWER来说是必要的。】

Comparing with Intel’s Best【与Intel的顶级对决】

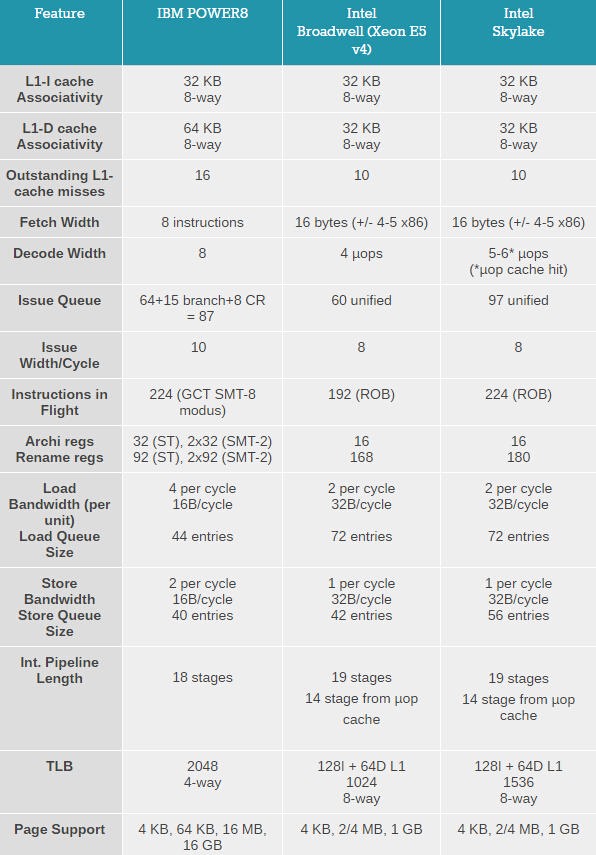

Comparing CPUs in tables is always a very risky game: those simple numbers hide a lot of nuances and trade-offs. But if we approach with caution, we can still extract quite a bit of information out of it.

【用表格比较CPU一直都是比较冒险的:这些简单的数字背后有很多细微差距和权衡。但如果我们小心谨慎,我们仍然能从中榨出很多信息。】

Both CPUs are very wide brawny Out of Order (OoO) designs, especially compared to the ARM server SoCs.

【这两者都是很宽的乱序执行设计,和ARM比起来要强大很多。】

Despite the lower decode and issue width, Intel has gone a little bit further to optimize single threaded performance than IBM. Notice that the IBM has no loop stream detector nor ?op cache to reduce branch misprediction. Furthermore the load buffers of the Intel microarchitecture are deeper and the total number of instructions in flight for one thread is higher. The TLB architecture of the IBM POWER8 has more entries while Intel favors speedy address translations by offering a small level one TLB and a L2 TLB. Such a small TLB is less effective if many threads are working on huge amounts of data, but it favors a single thread that needs fast virtual to physical address translation.

【虽然解码和发射宽度略低,Intel比IBM更加追求单线程性能。要注意IBM既没有用循环流检测器,也没有用微指令缓存来减少分支误预测。此外Intel的load缓存要更深,并且单线程缓存的指令数要更多。POWER8的TLB有更多的entries,而Intel更倾向通过小容量L1和L2 TLB的快速地址转换。这种小TLB在多线程大数据量下效果一般,但在单线程下表现很好。】

On the flip side of the coin, IBM has done its homework to make sure that 2-4 threads can really boost the performance of the chip, while Intel’s choices may still lead to relatively small SMT related performance gains in quite a few applications. For example, the instruction TLB, ?op cache (Decode Stream Buffer) and instruction issue queues are divided in 2 when 2 threads are active. This will reduced the hit rate in the micro-op cache, and the 16 byte fetch looks a little bit on the small side. Let us see what IBM did to make sure a second thread can result in a more significant performance boost.

Multi Threading Prowess【强大的多线程】

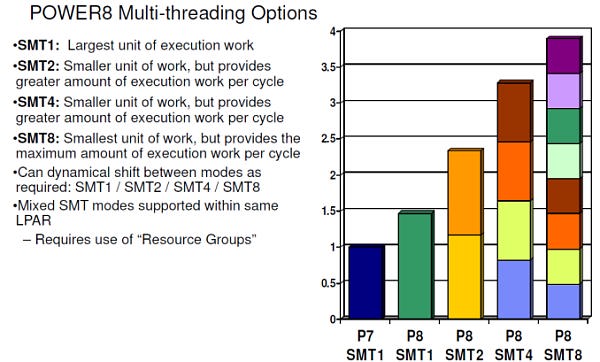

The gains of 2-way SMT (Hyperthreading) on Intel processors are still relatively small (10-20%) in many applications. The reason is that threads have to share most of the critical resources such as L1-cache, the instruction TLB, ?op cache, and instruction queue. That IBM uses 8-way SMT and still claims to get significant performance gains piqued our interest. Is this just benchmarketing at best or did they actually find a way to make 8-way SMT work?

【在很多应用程序中,Intel处理器的超线程(2路SMT)性能提升依然很小(10-20%)。原因是线程之间必须共享大多数最重要的资源,例如L1缓存、指令TLB、微指令缓存以及指令队列。然而IBM用了8路SMT却仍然声称能获得巨大的性能提升,这点让我们很好奇。这究竟是标杆营销呢,抑或说他们真正的找到了高效8路SMT的方法?】

It is interesting to note that with 2-way SMT, a single thread is still running at about 80% of its performance without SMT. IBM claims no less than a 60% performance increase due to 2-way SMT, far beyond what Intel has ever claimed (30%). This can not be simply explained by the higher amount of issue slots or decoding capabilities.

【需要注意的是2路SMT。不开SMT时单线程性能只发挥了80%。IBM声称开启2路SMT能带来至少60%的性能增长,比Intel说的30%要多得多。这不能简单地用更多的发射数或者更强解码能力来解释。】

The real reason is a series of trade-offs and extra resource investments that IBM made. For example, the fetch buffer contains 64 instructions in ST mode, but twice as many entries are available in 2-way SMT mode, ensuring each thread still has a 64 instruction buffer. In SMT4 mode, the size of the fetch buffer for each thread is divided in 2 (32 instructions), and only in SMT8 mode things get a bit cramped as the buffer is divided by 4.

【真实原因是IBM做出的一系列权衡,以及增加的额外资源。比如单线程64条的提取缓存,在2路SMT下就会变成双倍,保证每个线程依然能够有64条指令缓存。在SMT4模式下,每线程的提取缓存要除以二(32条),只有在SMT8模式下才会除以4,变成16条。】

The design philosophy of making sure that 2 threads do not hinder each other can be found further down the pipeline. The Unified Issue Queue (UniQueue) consists of two symmetric halves (UQ0 and UQ1), each with 32 entries for instructions to be issued.

【保证两个线程之间互不阻碍的这种设计哲学,更可以在后端管线中找到。统一发射队列保证两个线程(UQ0 UQ1)每个都有32entries。】

Each of these UQs can issue instructions to their own reserved Load/Store, Integer (FX), Load, and Vector units. A single thread can use both queues, but this setup is less flexible (and thus less performant) than a single issue queue. However, once you run 2 threads on top of a core (SMT-2), the back-end acts like it consists of two full-blown 5-way superscalar cores, each with their own set of physical registers. This means that one thread cannot strangle the other by using or blocking some of the resources. That is the reason why IBM can claim that two threads will perform so much better than one.

【每个UQ都可以发射指令到各自保留的Load/Store、整数(FX)、Load和向量单元中。单线程可以使用两个UQ,但这种方式比单UQ更缺乏灵活性。然而,如果你在一个核心上运行两个线程(SMT2),后端实际上就像两个完整的5-way超标量核心一样,每个都有自己的一套物理寄存器。这意味着线程之间不会因为共享资源而相互阻塞。这就是IBM为什么说SMT2带来的提升会这么大。】

It is somewhat similar to the “shared front-end, dual-core back-end” that we have seen in Bulldozer, but with (much) more finesse. For example, the data cache is not divided. The large and fast 64 KB D-cache is available for all threads and has 4 read ports. So two threads will be able to perform two loads at the same time. Another example is that a single thread is not limited to one half, but can actually use both, something that was not possible with Bulldozer.

【这和我们在推土机中见到的“共享前端,双核后端”比较类似,但设计上要巧妙的多得多。例如,POWER的指令缓存没有一分为二。【这里是Bulldozer曾经的败笔,后期推土机架构就增强了指令缓存关联性】大容量高速64K数据缓存则是所有线程共享,它有着4个读取端口。因此两个线程可以同时进行load操作。另外单线程也没有被限制成只能使用一半,而是能使用全部缓存,这一点在推土机上是不可能的。】【更多推土机架构细节链接】

Dividing those ample resources in two again (SMT-4) should not pose a problem. All resources are there to run most server applications fast and one of the two threads will regularly pause when a cache miss or other stalls occur. The SMT-8 mode can sometimes be a step too far for some applications, as 4 threads are now dividing up the resources of each issue queue. There are more signs that SMT-8 is rather cramped: instruction prefetching is disabled in SMT-8 modus for bandwidth reasons. So we suspect that SMT-8 is only good for very low IPC, “throughput is everything” server applications. In most applications, SMT-8 might increase the latency of individual threads, while offering only a small increase in throughput performance. But the flexibility is enormous: the POWER8 can work with two heavy threads but can also transform itself into a lightweight thread machine gun.

【如果再把这些充裕资源一分二(SMT4),应该不会产生什么问题。所有资源都用来高速运行服务器应用,并且当缓存未命中或者其他阻塞产生,两个线程中的一个线程会暂停。SMT8模式对于大多数应用优化还不好,而且SMT4模式就已经充分运用了所有资源。SMT8模式下的限制还有更多:由于带宽不足,SMT8模式下的指令预取被关闭。所以我们估计SMT8只对低IPC大吞吐量应用有好效果。在大多数应用中,SMT8可能会增加每个线程的延迟,同时只带来很小的性能提升。但灵活性是超强的:POWER8可以同时处理两个高负载线程,也能变身为低负载多线程的”机关枪“。】

System Specs【系统规格】

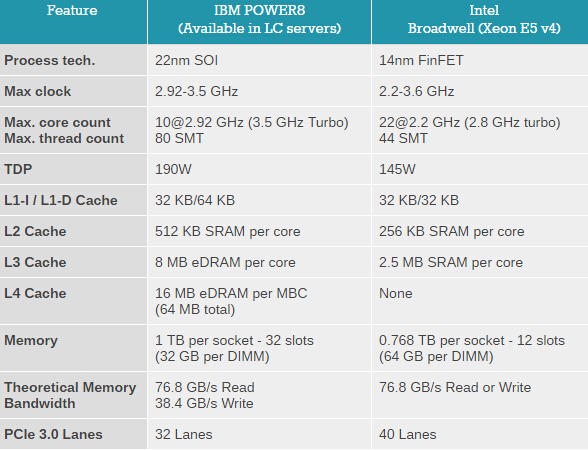

Lastly, let’s take a look at some high level specs. It is interesting to note that the IBM POWER8 inside our S812LC server is a 10-core Single Chip Module. In other words it is a single 10-core die, unlike the 10-core chip in our S822L server which was made of two 5-core dies. That should improve performance for applications that use many cores and need to synchronize, as the latency of hopping from one chip to another is tangible.

【我们用的S812LC是10核单芯片模块。意思是说它是单芯片10核,而不是S822L中两个5核芯片的胶水。这可以提升多核同步性能。】

The SKU inside the S812LC is available to third parties such Supermicro and Tyan. This cheaper SKU runs at “only” 2.92 GHz, but will easily turbo to 3.5 GHz.

【这块10个在第三方的超威和Tyan系统中也能使用。这块便宜的CPU“仅仅”运行在2.92Ghz,很容易就能加速到3.5Ghz】

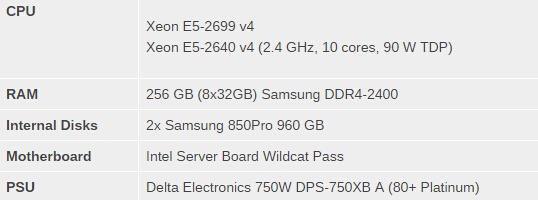

The Xeon and IBM POWER8 have totally different memory subsystems. The IBM POWER8 connects to 4 “Centaur” buffer cache chips, which have each a 19.2 GB/s read and 9.6 GB/s write link to the processor, or 28.8 GB/s in total. This is a more efficient connection than the Xeon which has a simpler half-duplex connection to the RAM: it can either write with 76.8 GB/s to the 4 channels or read from the 4 channels. Considering that reads happen twice as much as writes, the IBM architecture is – in theory – better balanced and has more aggregated bandwidth.

【至强和POWER8有着完全不同的内存子系统。POWER8连有4块“Centaur“缓存芯片,每片写入19.2GB/S,写入9.6GB/S,一共28.8GB/S。比起至强有效率的多:至强的四通道内存读/写76.8GB/S。因为读取比写入的次数要高两倍,所以IBM的架构在理论上更加均衡。】

Benchmark Selection【测试选择】

Our testing was conducted on Ubuntu Server 15.10 (kernel 4.2.0) with gcc compiler version 5.2.1.

【测试使用Ubuntu Server 15.10(内核4.2.0),gcc编译器5.2.1】

The choice of comparing the IBM POWER8 2.92 10-core with the Xeon E5-2699 v4 22-core might seem weird, as the latter is three-times as expensive as the former. However, for this review, where we evaluate single thread/core performance, pricing does not matter. As this is one of the lowest clocked POWER8 CPUs, an Intel Xeon with a high base clock – something that’s common for Intel’s chips with fewer cores – would make it harder to compare the two microarchitectures. We also wanted an Intel chip that could reach high turbo clockspeeds thanks to a high TDP.

【选择的对手是E5 2699V4 22核,看起来不是很对等,它有着三倍于这块POWER8的价钱。但测试单线程/核心性能时,价格并不重要。因为这块是最便宜频率最低的POWER8 CPU,而大多数核心更少的至强基础频率会更高,对比架构就比较困难了。我们想要块基础频率低睿频高的CPU。】

And last but not least we did not have very many Xeon E5 v4 SKUs in the lab…

【最后也是最重要的是我们的实验室里没多少E5V4。。。】

Configuration【规格】

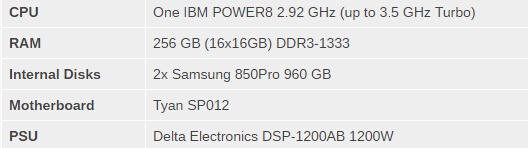

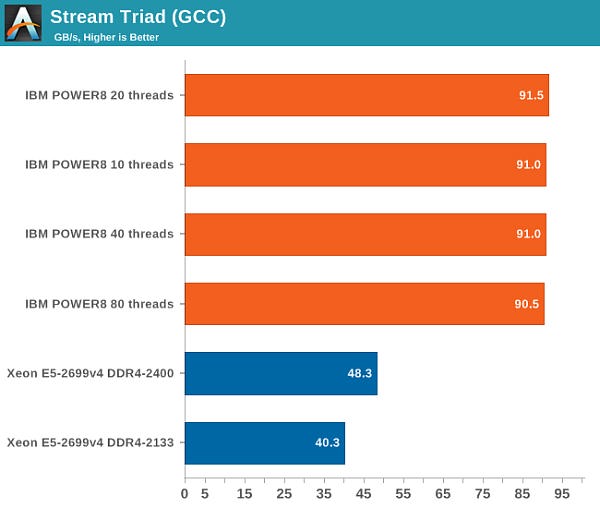

IBM S812LC (2U)

The IBM S812LC is based up on Tyan’s “Habanero” platform. The board inside the IBM server is thus designed by Tyan.

【S812LC基于Tyan的Habanero平台。】

Intel’s Xeon E5 Server ? S2600WT (2U Chassis)

Hyperthreading, Turbo, C1 and C6 were enabled in the BIOS.

【HT,睿频,C1,C6都开启。】

Memory Subsystem: Bandwidth【内存子系统带宽】

As we mentioned before, the IBM POWER8 has a memory subsystem which is more similar to the Xeon E7’s than the E5’s. The IBM POWER8 connects to 4 “Centaur” buffer cache chips, which have both a 19.2 GB/s read and 9.6 GB/s write link to the processor, or 28.8 GB/s in total. So the 105 GB/s aggregate bandwidth of the POWER8 is not comparable to Intel’s peak bandwidth. Intel’s peak bandwidth is the result of 4 channels of DDR4-2400 that can either write or read at 76.8 GB/s (2.4 GHz x 8 bytes per channel x 4 channels).

【跟之前提到的一样,POWER8有着类似于至强E7的内存子系统。POWER8连有4块“半人马“内存缓存芯片,每片写入19.2GB/S,写入9.6GB/S,一共28.8GB/S。所以POWER8总带宽105GB/S。Intel搭配4通道DDR4 2400峰值带宽为76.8GB/S,读写均可。】

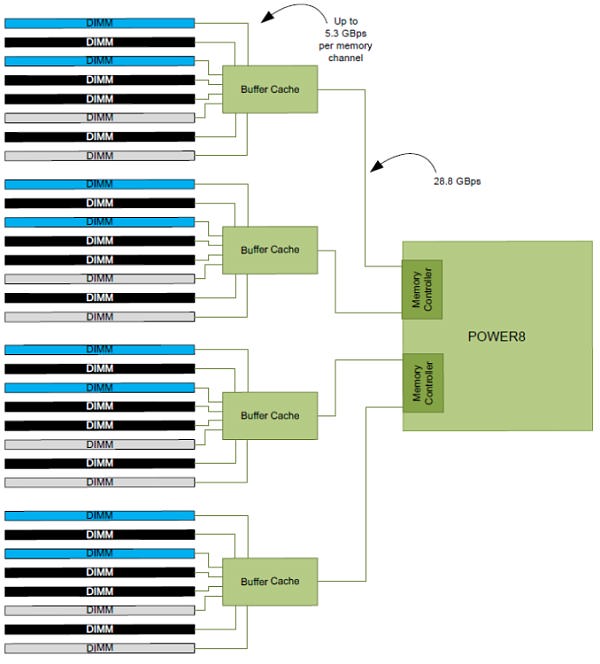

Bandwidth is of course measured with John McCalpin’s Stream bandwidth benchmark. We compiled the stream 5.10 source code with gcc 5.2.1 64 bit. The following compiler switches were used on gcc:

【带宽使用 John McCalpin’s Stream bandwidth benchmark测试。我们用gcc 5.2.1 64位编译了stream 5.10的源码。】

-Ofast -fopenmp -static -DSTREAM_ARRAY_SIZE=120000000

The latter option makes sure that stream tests with array sizes which are not cacheable by the Xeons’ huge L3 caches.

【最后的一个选项保证了stream测试不会被至强的超大L3缓存到。】

It is important to note why we use the GCC compiler and not vendors’ specialized compilers: the GCC compiler is not as good at vectorizing the code. Intel’s ICC compiler does that very well, and as result shows the bandwidth available to highly optimized HPC code, which is great for that code in the real world, but it’s not realistic for multi-threaded server applications.

【使用GCC编译器而不是专用编译器的原因:GCC编译器不擅长向量化代码。Intel的ICC编译器做得很好,而且结果显示的是高度定制的HPC代码可用带宽,而不是实际服务器应用的可用带宽。】

With ICC, Intel can use the very wide 256-bit load units to their full potential and we measured up to 65 GB/s per socket. But you also have to consider that ICC is not free, and GCC is much easier to integrate and automate into the daily operations of any developer. No licensing headaches, no time consuming registrations.

【ICC中Intel用到了256bit load单元来发挥全部潜力,达到了65GB/S带宽。但你要知道,用ICC要掏钱的,而GCC在日常开发者应用中更容易集成和自动化,不需要担心授权和注册到期。】

The combination of the powerful four load and two store subsystem of the POWER8 and the read/write interconnect between the CPU and the Centaur chips makes it much easier to offer more bandwidth. The IBM POWER8 delivers a solid 90 GB/s despite using old DDR3-1333 memory technology.

【POWER8采用的4个load2个store的系统,以及半人马芯片,使得POWER更容易带来高带宽。就算用DDR3-1333也能达到90GB带宽。】

Intel claims higher bandwidth numbers, but those numbers can only be delivered in vectorized software

【Intel官方的数字更高,但那种数字只能在向量化软件中得到。】

Memory Subsystem: Latency Measurements【内存子系统延迟】

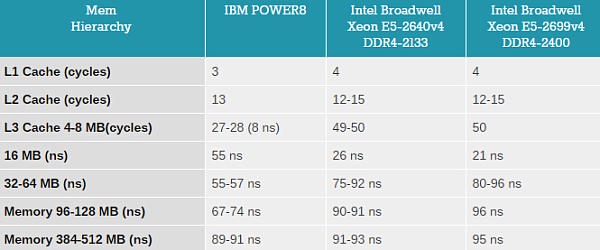

There is no doubt about it: the performance of modern CPUs depends heavily on the cache subsystem. And some applications depend heavily on the DRAM subsystem too. We used LMBench in an effort to try to measure latency. Our favorite tool to do this, Tinymembench, does not support the POWER architecture yet. That is a pity, because it is a lot more accurate and modern (as it can test with two outstanding requests).

【毫无疑问:现代CPU的性能非常依靠缓存子系统,一些应用也非常依靠内存子系统。我们用LMBench来测量延迟。】

The numbers we looked at were “Random load latency stride=16 Bytes” (LMBench).

【结果是LMBench的“Random load latency stride=16 Bytes”项】

(Note that the numbers for Intel are higher than what we reported in our Cavium ThunderX review. The reason is that we are now using the numbers of LMBench and not those of Tinymembench.)

【注意至强的结果比我们之前的要高,因为我们换成了LMBench而不是之前的Tinymenmbench。】

A 64 KB L1 cache with 4 read ports that can run at 4+ GHz speeds and still maintain a 3 cycle load latency is nothing less than the pinnacle of engineering. The L2 cache excels too, being twice as large (512 KB) and still offering the same latency as Intel’s L2.

【4读取端口、4G+频率的64KB L1,却仍能保持3周期的load延迟,这绝对是顶尖设计。L2缓存也很棒,大小是Intel的两倍,延迟却能保持一致。】

Once we get to the eDRAM L3 cache, our readings get a lot more confusing. The L3 cache is blistering fast as long as you only access the part that is closest to the core (8 MB). Go beyond that limit (16 MB), and you get a latency that is no less than 7 times worse. It looks like we actually hitting the Centaur chips, because the latency stays the same at 32 and 64 MB.

【到了eDRAM L3缓存的时候,分数让我们有点困惑。如果你只读取离核心最近的L3(8MB),它的速度就要上天。但如果超过这个值,读取16MB(16MB),延迟就比之前差了7倍以上。看起来我们实际上命中的不是L3而是半人马缓存,因为读取32到64MB时结果也是相同的。】

Intel has a much more predictable latency chart. Xeon’s L3 cache needs about 50 cycles, and once you get into DRAM, you get a 90-96 ns latency. The “transistion phase” from 26 ns L3 to 90 ns DRAM is much smaller.

【Intel的更容易估计,至强的L3需要大概50个周期,如果要读取内存,延迟就变成90-96ns。从26ns的L3到90ns的内存过渡较平缓。】

Comparatively, that “transition phase” seems relatively large on the IBM POWER8. We have to go beyond 128 MB before we get the full DRAM latency. And even then the Centaur chip seems to handle things well: the octal DDR-3 1333 MHz DRAM system delivers the same or even slightly better latency as the DDR4-2400 memory on the Xeon.

【相对的,POWER8的跨度看起来有点大,要得到完全的内存延迟需要读取128MB以上。而且半人马芯片性能很好:8通道DDR3 1333性能和DDR4 2400相同甚至稍好。】

In summary, IBM’s POWER8 has a twice as fast 8 MB L3, while Intel’s L3 is vastly better in the 9-32 MB zone. But once you go beyond 32 MB, the IBM memory subsystem delivers better latency. At a significant power cost we must add, because those 4 memory buffers need about 64 Watts.

【总结,POWER8有着两倍快的8MB L3,而Intel的L3在9-32MB则快很多。一旦超过32MB,IBM的内存子系统性能就更好。当然功耗也增加不少,因为4块半人马芯片功耗大概64W。】

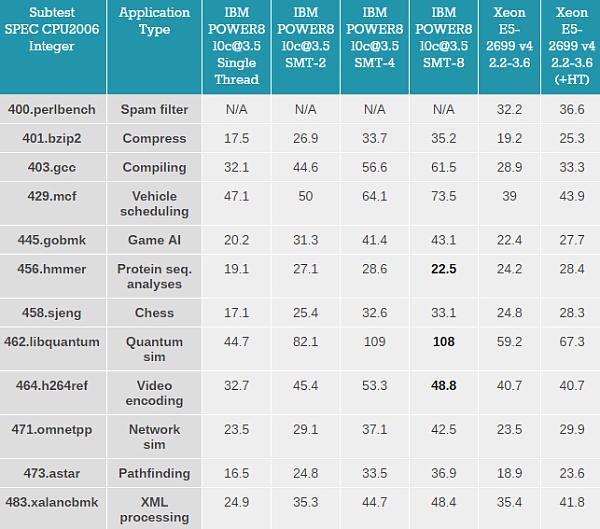

Single-Threaded Integer Performance: SPEC CPU2006【单线程整数性能:SPEC2006】

Even though SPEC CPU2006 is more HPC and workstation oriented, it contains a good variety of integer workloads. Running SPEC CPU2006 is a good way to evaluate single threaded (or core) performance. The main problem is that the results submitted are “overengineered” and it is very hard to make any fair comparisons.

【虽然SPEC2006主打HPC和工作站,但它依然有各种整数负载。跑SPEC2006是评估单核性能的好方式。主要问题是结果都被提前处理过,导致很难公平比较】

For that reason, we wanted to keep the settings as “real world” as possible. So we used:

【为此,我们想把结果尽量做得真实,所以我们采用了:】

- 64 bit gcc 5.2.1: most used compiler on Linux, good all round compiler that does not try to “break” benchmarks (libquantum…)【64bit GCC 5.2.1:使用最多的Linux编译器,很好的通用编译器并且不会“破坏”测试

- -Ofast: compiler optimization that many developers may use【-Ofast:大多数人都会用的编译器优化】

- -fno-strict-aliasing: necessary to compile some of the subtests【-fno-strict-aliasing: 对于编译一些子测试很必要】

- base run: every subtest is compiled in the same way.【base run:每个测试编译方式都相同】

The ultimate objective is to measure performance in applications where for some reason ? as is frequently the case ? a “multi-thread unfriendly” task keeps us waiting.

【终极目标就是测试对多线程不友好的负载下的性能。】

Here is the raw data. Perlbench failed to compile on Ubuntu 15.10, so we skipped it. Still we are proud to present you the very first SPEC CPU2006 benchmarks on Little Endian POWER8.

【下面是原始数据。Prelbench在Ubuntu15.10下编译失败,所以我们跳过了。但我们依然对于呈现给你首个POWER8小端下的SPEC 2006测试分数感到骄傲。】

On the IBM server, numactl was used to physically bind the 2, 4, or 8 copies of SPEC CPU to the first 2, 4, or 8 threads of the first core. On the Intel server, the 2 copy benchmark was bound to the first core.

【在IBM服务器上,使用了numactl,来让第一个核心的2,4,8条线程与SPEC CPU的2,4,8份副本绑定。在Intel服务器上,第一个核心绑定了2个副本。】

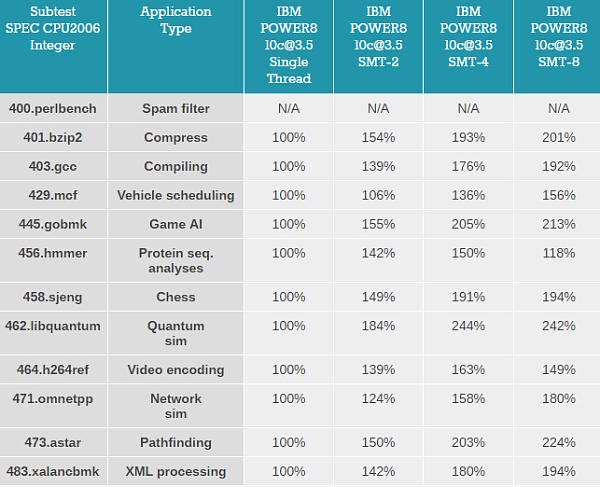

First we look at how well SMT-2, SMT-4 and SMT-8 work on the IBM POWER8.

【首先看看POWER8的SMT2、SMT4、SMT8的成绩】

The performance gains from single threaded operation to two threads are very impressive, as expected. While Intel’s SMT-2 offers in most subtests between 10 and 25% better performance, the dual threaded mode of the POWER8 boosts performance by 40 to 50% in most applications, or more than twice as much relative to the Xeons. Not one benchmark regresses when we throw 4 threads upon the IBM POWER8 core. The benchmarks with high IPC such as hmmer peak at SMT-4, but most subtests gain a few % when running 8 threads.

【和预计的一样,SMT2的性能提升非常大。Intel的SMT2只带来10-25%的性能提升,而POWER8的能带来40-50%的提升,比至强高了两倍还多。开到SMT4,没有一项测试有倒退。在hmmer这样的高IPC测试中,性能在SMT4模式下达到顶峰。大多数测试在开启SMT8后只有少许提升】

Multi-Threaded Integer Performance on one core: SPEC CPU2006【单核多线程测试】

Broadly speaking, the value of SPEC CPU2006’s int rate test is questionable, as it puts too much emphasis on bandwidth and way too little emphasis on data synchronization. However, it does give some indication of the total “raw” integer compute power available.

【通常来说,SPEC2006整数测试的价值是要打个问号的,因为它过于注重带宽,却很少注重数据一致性。然而它确实给了我们原始的测试数据。】

We will make an attempt to understand the differences between IBM and Intel, but to be really accurate we would need to profile the software and runs dozens of tests while looking at the performance counters. That would have set back this article a bit too much. So we can only make an educated guess based upon what the existing academic literature says and our experiences with both architectures.

【准确估计至强和POWER直接的不同是很困难的,我们只能根据现存的学术文章、经验来进行有依据的判断】

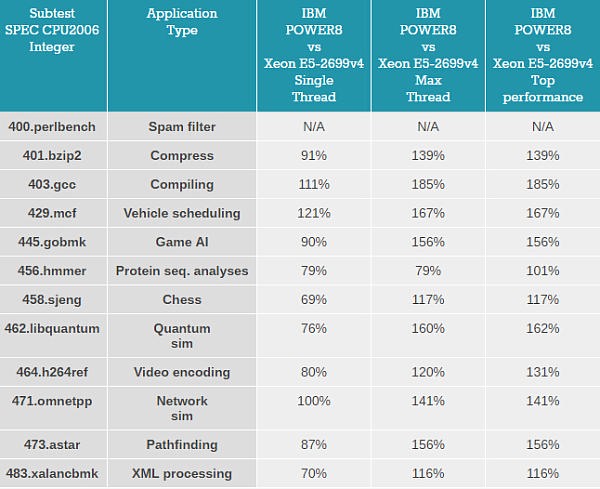

The Intel CPU performance is the 100% baseline in each column.

【下面的表格以至强的性能为100%】

On (geometric) average, a single thread running on the IBM POWER8 core runs about 13% slower than on an Intel Broadwell architecture core. So our suspicion that Intel is still a bit better at extracting parallelism when running a single thread is confirmed.

【POWER8单线程下比Broadwell要慢大概13%。我们估计Intel在单线程提取并行时还是要略好的。】

Intel gains the upper-hand in the applications where branch prediction plays an important role: chess (sjeng), pathfinding (astar), protein seq. analysis (hmmer), and AI (gobmk). Intel’s branch misprediction penalty is lower if the other branch is available in the ?op cache (the Decode Stream Buffer) and Intel has a few clever tricks that the IBM core does not have like the loop stream detector.

【Intel在多分支预测的测试中占得上风:chess (sjeng), pathfinding (astar), protein seq. analysis (hmmer) 和 AI (gobmk)。Intel的分支误预测开支要低一些,而且Intel还有一些IBM没有的设计,比如循环流检测器。】

Where the POWER8 core shines is in the benchmarks where memory latency is important and where the load units are a bottleneck, like vehicle scheduling (mcf). This is also true, but in lesser degree, for the network simulation (omnetpp). The reason might be that omnetpp puts a lot of pressure on the OoO buffers, and Intel’s architecture offers more room with its unified buffers, whereas IBM POWER8’s buffers are more partitioned (see for example the issue queue). Meanwhile XML processing does a lot of pointer chasing, but quick profiling has shown that this benchmark mostly hits the L2, and somewhat the L3. So there’s no disadvantage for Intel there. On the flip side, Xalancbmk is the benchmark with the highest pressure on the ROB. Again, the larger OOO buffers for one thread might help Intel to do better.

【POWER8的强项在于对内存延迟敏感,或者load单元成为瓶颈的测试,例如vehicle scheduling (mcf)。在 network simulation (omnetpp)也差不多,但提升更小。原因可能是omnetpp对乱序缓存压力很大,Intel架构的统一缓存带来了更多空间,而POWER8的缓存被分割了。同时XML处理相对Intel还有很大差距,因为这个测试主要使用L2以及部分L3,这是Intel的强项。另一方面, XML处理对ROB施加的压力最多。再一次,Intel的大容量乱序缓存占了优势。】

POWER8 also does well in GCC, which has a high percentage of branches in the instruction mix, but very few branch mispredictions. GCC compiling is latency sensitive, so a 3 cycle L1, a 13 cycle L2, and the fast 8MB L3 help.

【POWER8在GCC中做的也不错,在指令中有高比例的分支,但误预测概率低。GCC编译对延迟敏感,所以POWER8 3周期的L1,13周期的L2以及高速L3占优。】

Finally, the pathfinding (astar) benchmark does some intensive pointer chasing, but it misses the L1- and L2-cache much less often than xalancbmk, and has the highest amount of branch misprediction. So the impact of the pointer chasing and memory latency is thus minimal.

【pathfinding (astar)中pointer chasing占一部分,但它L1和L2的未命中率比XML低很多,还有着最高的分支误预测率。因此pointer chasing和内存延迟的影响很小。】

Once all threads are active, the IBM POWER8 core is able to outperform the Intel CPU by 41% (geomean average).

【一旦线程全开,POWER8比至强要快平均41%。】

Closing Thoughts【总结】

Testing both the IBM POWER8 and the Intel Xeon V4 with an unbiased compiler gave us answers to many of the questions we had. The bandwidth advantage of POWER8’s subsystem has been quantified: IBM’s most affordeable core can offer twice as much bandwidth than Intel’s, at least if your application is not (perfectly) vectorized.

【这次测试解决了我们的很多疑问。POWER8的带宽优势确定:IBM最便宜的POWER8可以提供至强的两倍带宽(如果你的应用没有完美向量化)】

Despite the fact that POWER8 can sustain 8 instructions per clock versus 4 to 5 for modern Intel microarchitectures, chips based on Intel’s Broadwell architecture deliver the highest instructions per clock cycle rate in most single threaded situations. The larger OoO buffers (available to a single thread!) and somewhat lower branch misprediction penalty seem to the be most likely causes.

【虽然POWER8每周期可以分派8条指令,Intel只有4-5条,但Broadwell在单线程上依然提供了最高的IPC。BDW更大的乱序缓存(单一线程可用)、更低的分支预测开支是最可能的原因。】

However, the difference is not large: the POWER8 CPU inside the S812LC delivers about 87% of the Xeon’s single threaded performance at the same clock. That the POWER8 would excel in memory intensive workloads is not a suprise. However, the fact that the large L2 and eDRAM-based L3 caches offer very low latency (at up to 8 MB) was a surprise to us. That the POWER8 won when using GCC to compile was the logical result but not something we expected.

【然而差距并不大很大:S812LC的POWER8在同频率下,提供了Broadwell的87%的单核性能。POWER8在对内存负载高的程序中性能更好。然而POWER8的大L2和低延迟L3给了我们惊喜。POWER8使用GCC编译器取胜是合理的结果,但这是我们没料到的。】

The POWER8 microarchitecture is clearly built to run at least two threads. On average, two threads gives a massive 43% performance boost, with further peaks of up to 84%. This is in sharp contrast with Intel’s SMT, which delivers a 18% performance boost with peaks of up to 32%. Taken further, SMT-4 on the POWER8 chip outright doubles its performance compared to single threaded situations in many of the SPEC CPU subtests.

【显然POWER8架构是设计为至少运行两个线程的。SMT2带来了平均43%的性能提升,最多能达到84%。这和Intel的SMT2形成了鲜明对比,Intel的只能带来平均18%,最多32%的提升。进一步的说,POWER8的SMT4模式能带来单线程两倍的性能。】

All in all, the maximum throughput of one POWER8 core is about 43% faster than a similar Broadwell-based Xeon E5 v4. Considering that using more cores hardly ever results in perfect scaling, a POWER8 CPU should be able to keep up with a Xeon with 40 to 60% more cores.

【总的来说,POWER8的最大吞吐量比相似的E5 V4要高43%。由于更多的核心并不一定能带来更多性能,一个POWER8 CPU的性能应该能和比自己多出40-60%核心的至强持平。】

To be fair, we have noticed that the Xeon E5 v4 (Broadwell) consumes less power than its formal TDP specification, in notable contrast to its v3 (Haswell) predecessor. So it must be said that the power consumption of the 10 core POWER8 CPU used here is much higher. On paper this is 190W + 64W Centaur chips, versus 145W for the Intel CPU. Put in practice, we measured 221W at idle on our S812LC, while a similarly equipped Xeon system idled at around 90-100W. So POWER8 should be considered in situations where performance is a higher priority than power consumption, such as databases and (big) data mining. It is not suited for applications that run close to idle much of the time and experience only brief peaks of activity. In those markets, Intel has a large performance-per-watt advantage. But there are definitely opportunities for a more power hungry chip if it can deliver significantly greater performance.

【我们发现E5 V4的功耗比正式TDP要低,这和前代Haswell不同。必须要提到的是10核POWER8的功耗要高得多。纸面上它的功耗是190W+64W的半人马芯片,而至强只有145W。实际上我们测得的S812LC待机功耗是221W,相近的至强则为90-100W。因此POWER8更适合性能优先的场合,比如数据中心或者大数据挖掘。它并不适合长期接近待机,只有少数时候才满载的应用。在这些市场,Intel有着能耗比优势。但很明显,高性能高耗电的芯片也是有机会的。】

L3缓存测试Power和Xeon间的差距主要原因是L3缓存的设计不同。Power8是8MB/core,每个core的缓存独立设计(可以通过inter-core communication来读取其他核的缓存,但不是默认共享)。所以8-16MB缓存测试时Power处理器实际上是读取片外缓存(L4)。Xeon采用的是共享L3,8-16MB测试时仍然访问L3cache。但需要指出的是,这对于单核测试而言对Power不公平,因为多核同时工作时Xeon每个核分到的L3cache要远远少于Power8。而且共享设计会造成核间的cache flush也就是运行在不同核上的任务间会互相抢占和影响cache,这也是为什么新的Xeon中引入了cache partition(CAT)技术来保证cache访问的isolation。

OoO好萌

马文,这个IBM POWER8评测还有个part 2,能不能也翻出来,还有你有没有POWER9的消息?

@yue96:Part2隔了两个月早忘了

等有时间了再…

我这还好几篇堆着没翻