VEGA is better than you think

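[This article is only an excerpted comment and does not represent the views of this site. In the end, it is nothing more than speculation.]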

The VEGA 10 (GCN 5.0) Architecture is at present being judged by the Frontier Edition (Workstation / PRO) Drivers, and while these do include (Consumer / RX) Drivers with the ability to switch between the two… currently neither of the VEGA 10 Drivers actually supports the VEGA 10 Features beyond HBCC.

Yes, the Workstation Drivers do support FP16 / FP32 / FP64, as opposed to the Consumer Drivers that support only FP32 (Native) and FP16 via Atomics.

Atomics allow a Supported Feature to be used, but you’re still Restricted by the Driver Implementation as opposed to getting Direct GPU Optimisation.

FP16 Atomics do not provide the same leverage for Optimisation as a Native FP16 Pipeline.

Essentially we’re talking about a difference, Vs. an FP32 Pipeline, of +20% (Atomics) Vs. +60% (Native) Performance.

Now it should still be noted that we’re not seeing +100% Performance, because…

The Asynchronous Compute Engines (ACE) are still limited to 4 Pipelines and only support Packed Math Formats, which requires a slightly larger and more complex ACE than an FP32 version… thus you’re not strictly getting 8x FP16 or 4x FP32 as in Legitimate Threads, but instead the Packing and Unpacking of the Results is occurring via the CPU (Drivers), so you have added Latency and what can be best described as “Software Threading”
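
To make “Packed Math” a bit more concrete: a packed format stores two FP16 values in the space of one 32-bit word, so something has to pack the operands and unpack the results. The following is only a minimal Python sketch of that packing step using the standard `struct` module. The helper names `pack_half2` and `unpack_half2` are made up for illustration, and nothing here describes how AMD’s drivers or hardware actually do it.

```python
import struct
from typing import Tuple


def pack_half2(lo: float, hi: float) -> int:
    """Pack two FP16 values into one 32-bit word (hypothetical helper)."""
    # 'e' is IEEE 754 half precision; '<2e' means two of them, little-endian.
    raw = struct.pack("<2e", lo, hi)
    # Reinterpret the same 4 bytes as a single unsigned 32-bit integer.
    return struct.unpack("<I", raw)[0]


def unpack_half2(word: int) -> Tuple[float, float]:
    """Split a 32-bit word back into its two FP16 values (hypothetical helper)."""
    raw = struct.pack("<I", word)
    return struct.unpack("<2e", raw)


word = pack_half2(1.5, -2.0)
print(hex(word))           # both halves share one 32-bit word
print(unpack_half2(word))  # the round trip recovers both values
```

Both 1.5 and -2.0 are exactly representable in FP16, so the round trip is lossless here; values that are not would be rounded when packed.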

So yeah, you’re looking at ~40% Performance compared to a pure Hardware Solution; still, this is within the same region of performance improvement that NVIDIA achieve through Giga-Threading, which is almost literally Hyper-Threading for CUDA.

And as such you will see marginal benefits (up to 30%) in Non-Predictive Branches (i.e. Games) and 60% in Predictive Branches (i.e. Deep Learning, Rendering, Mining, etc.)

As this is entirely Software Handled, assuming support for Packed Math within the ACE… this is why we’re seeing that the RX VEGA Frontier Edition is essentially on par with GCN 3.0 IPC, i.e. with what FIJI would do if it were capable of being Overclocked to the same Clock Speeds. So, eh… this provides Decent Performance, but keep in mind that essentially what we’re seeing is what VEGA is capable of on FIJI (GCN 3.0) Drivers.

In short… what is happening is that the Drivers are acting as a Limiter; in essence you have a Bugatti Veyron in “Road” Mode, where it just ends up a more pleasant drive overall… but that’s a W16 under-the-hood. It can do better than the 150MPH that it’s currently limiting you to.

The question here ends up being, “Well just how much of a difference will Drivers make?” … Conservatively speaking, the RX VEGA Consumer Drivers are almost certainly going to provide 20 – 35% Performance Uplift over what the Frontier Edition has showcased on FIJI Drivers.

Yet most of that optimisation will come from FP16 Support, Tile-Based Rendering, the Geometry Discard Pipeline, etc., while HBCC will continue to ensure that the GPU isn’t starved for Data, maintaining very respectable Minimums that are almost certainly making NVIDIA start to feel quite nervous.

Still, this isn’t the “Party Trick” of the VEGA Architecture.

Something that most never really noticed was AMD’s claim when they revealed the Features of Vega.

Primarily that it supports 2X Thread Throughput. This might seem minor, but what I’m not sure people quite grasped (NVIDIA did, because they got the GTX 1080 Ti and Titan Xp out to market ASAP following the official announcement of said features) is that this is actually perhaps THE most remarkable aspect of the Architecture.

So… what does this mean?

In essence the ACE on GCN 1.0 to 4.0 has 4 Pipelines, each 128-Bit Wide. This means it processes 64-Bit on the Rising Edge, and 64-Bit on the Falling Edge of a Clock Cycle.

Now each CU (64 Stream Processors) is actually 16 SIMD (Single Instruction, Multiple Data / Arithmetic Logic Units). Each SIMD Supports a Single 128-Bit Vector (4x 32-Bit Components, i.e. [X, Y, Z, W]), and because you can process each individual Component… this is why it’s denoted as 64 “Stream” Processors, because 4×16 = 64.

As I note, the ACE has 4 Pipelines that Process 4×128-Bit Threads Per Clock.

The Minimum Operation Time is 4 Clocks … as such 4×4 = 16x 128-Bit Asynchronous Operations Per Clock (or 64x 32-Bit Operations Per Clock)
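
The arithmetic in the last few paragraphs can be laid out as a short Python sketch. It only reproduces the post’s own figures (16 SIMD per CU, 4 Components per Vector, 4 ACE Pipelines, a 4 Clock Minimum Operation Time); the variable names are made up for illustration, and none of this is a verified description of the hardware.

```python
# Figures as claimed in the post for GCN 1.0 to 4.0 (not verified).
SIMD_PER_CU = 16            # SIMD units in one Compute Unit
COMPONENTS_PER_VECTOR = 4   # X, Y, Z, W
COMPONENT_BITS = 32         # width of one Component
ACE_PIPELINES = 4           # Pipelines per ACE
MIN_OPERATION_CLOCKS = 4    # Minimum Operation Time in Clocks

# One Vector is 4 x 32-Bit = 128-Bit.
vector_bits = COMPONENTS_PER_VECTOR * COMPONENT_BITS

# "64 Stream Processors" per CU: 16 SIMD x 4 Components.
stream_processors = SIMD_PER_CU * COMPONENTS_PER_VECTOR

# The post's ACE figure: 4 Pipelines x 4 Clocks = 16 Vector Operations,
# which it also counts as 64 individual 32-Bit Operations.
vector_ops = ACE_PIPELINES * MIN_OPERATION_CLOCKS
component_ops = vector_ops * COMPONENTS_PER_VECTOR

print(f"Vector width:        {vector_bits}-Bit")
print(f"Stream Processors:   {stream_processors} per CU")
print(f"128-Bit Operations:  {vector_ops}")
print(f"32-Bit Operations:   {component_ops}")
```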

GCN 5.0 still has the same 4 Pipelines, but each is now 256-Bit Wide. This means it processes 128-Bit on the Rising Edge, and 128-Bit on the Falling Edge.

Each CU is also now 16 SIMD that support a Single 256-Bit Vector or Double 128-Bit Vector or Quad 64-Bit Vector (4x 64-Bit, 8x 32-Bit, 16x 16-Bit).
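
The lane counts quoted here follow from dividing the Vector width by the element width. A minimal Python sketch, taking the post’s 256-Bit figure for GCN 5.0 at face value and comparing it with the 128-Bit figure it gives for earlier GCN (the function name `lanes` is hypothetical):

```python
def lanes(vector_bits: int, element_bits: int) -> int:
    """Number of elements of a given width that fit in one Vector."""
    if vector_bits % element_bits != 0:
        raise ValueError("element width must divide the vector width")
    return vector_bits // element_bits


for element_bits in (64, 32, 16):
    old = lanes(128, element_bits)  # width the post gives for GCN 1.0 to 4.0
    new = lanes(256, element_bits)  # width the post gives for GCN 5.0
    print(f"{element_bits}-Bit elements: {old} -> {new} lanes")
```

Under these assumed widths every element size gets twice as many lanes, which is the doubling the post ties to the 2X Thread Throughput claim.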

It does remain the same SIMD; merely the Functionality is expanded to support Multiple Width Registers, in a very similar approach to AMD64 SIMD on their CPUs, where, believe it or not, AMD SIMD (SSE) is FASTER than Intel’s because of their approach. This is why Intel kept introducing new Slightly Incompatible versions of SSE / AVX / etc.

They’re literally doing it to screw over AMD Hardware being better by using their Market Dominance to force a Standard that deliberately slows down AMD Performance, hence why Bulldozer Architecture appeared to be somewhat less capable in a myriad of common scenarios.

Anyway, what this means is Vega remains 100% Compatible and can be run as if it were a current Generation GCN Architecture.

So all of the Stability, Performance Improvements, etc. should translate pretty well, and it will act in essence like a 64CU Polaris / Fiji at 1600MHz; and well, that’s what we see in the Frontier Edition Benchmarks.

Now a downside of this is, well, it’s still strictly speaking using the “Entire” GPU to do this… so the power utilisation numbers appear curiously High for the performance it’s providing; but remember it is being used as if under 100% Load, while in reality its Utilisation is actually 50%.

Here’s where it begins to make sense as to why, when they originally began showing RX VEGA at Trade Conventions, they were using it in a Crossfire Combination; it is a Subtle (to anyone paying attention, again like NVIDIA) hint at the Ballpark of what a SINGLE RX VEGA will be capable of under a Native Driver when fully Optimised.

And well… its performance is frankly staggering, as it was running Battlefield 1, Battlefront, Doom and Sniper Elite 4 at UHD 5K at 95 FPS+.

For those somewhat less versed in the processing Power Required here:

The Titan Xp is capable of UHD 4K on those games at about 120 FPS; if you were to increase it to UHD 5K it would drop to 52 FPS. At this point it’s perhaps dawning on those reading this why NVIDIA have somewhat entered “Full Alert Mode”… because Volta was aimed at ~20% Performance Improvement, and this was being achieved primarily via just making a larger GPU with more CUDA Cores.
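
For a rough sense of the extra work 5K means, here is a small Python sketch of the pixel counts. It assumes “UHD 4K” is 3840×2160 and “UHD 5K” is 5120×2880 (the post does not give resolutions), and it uses the simplification that frame rate falls in proportion to pixel count, which real games only loosely follow.

```python
# Assumed resolutions; the post only says "UHD 4K" and "UHD 5K".
PIXELS_4K = 3840 * 2160
PIXELS_5K = 5120 * 2880


def scale_fps(fps: float, pixels_from: int, pixels_to: int) -> float:
    """Naive estimate: frame rate is inversely proportional to pixel count."""
    return fps * pixels_from / pixels_to


pixel_ratio = PIXELS_5K / PIXELS_4K
naive_5k_fps = scale_fps(120, PIXELS_4K, PIXELS_5K)

print(f"5K has {pixel_ratio:.2f}x the pixels of 4K")
print(f"120 FPS at 4K -> about {naive_5k_fps:.1f} FPS at 5K (naive estimate)")
```

The 52 FPS quoted in the post is lower than this naive estimate; the post does not say how that figure was obtained.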

RX VEGA has the potential to dwarf this in its current state.

Still this also begins to bring up the question… “If AMD have that much performance just going to waste… Why aren’t they using it to Crush NVIDIA? Give them a Taste of their own Medicine!”

Simple… they don’t need to, and it’s actually not advantageous for them to do so.

While doing this might give them the Top-Dog Spot for the next 12-18 months… NVIDIA aren’t idiots, and they’ll find a way to become competitive; either Legitimately, or via utilising their current Market Share.

And people will somewhat accept them doing this to “Be Competitive”, but if AMD aren’t being overly aggressive and letting NVIDIA remain in their Dominant Position; while offering value and slowly removing NVIDIA from the Mainstream / Entry Level… well then not only do they know that they can with each successive “Re-Brand” Lower Costs, Improve the Architecture and offer a Meaningful Performance Uplift for their Consumers while remaining Competitive with anything NVIDIA produce.

They can also (which they do appear to be doing) offer better performance and value with Workstation GPUs in those scenarios… again better than what NVIDIA can offer, and in said Arena NVIDIA don’t have the same tools (i.e. Developer Support / GameWorks / etc.) to really do anything about this beyond throwing their toys out of the pram.

As I note here, NVIDIA can’t exactly respond without essentially appearing to be petty / vindictive and potentially breaking Anti-Trust (Monopoly) Laws to really strike out against AMD essentially Sandbagging them.

With perhaps the worst part for NVIDIA here being, they can see it plain as bloody day what AMD are doing; but can’t do anything about it. Knowing that regardless of what they do, AMD can within a matter of weeks put together a next-generation launch (rebrand); push out new drivers that tweak performance and simply match it while undercutting the price by £20-50.

Even at the same price, it will make NVIDIA look like it’s losing its edge.

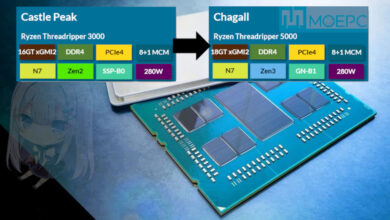

THAT is what Vega and Polaris have both been about for AMD, the same is true with Ryzen, Threadripper and Epyc.

AMD aren’t looking at a short term “Win” for a Generation… they’re clearly seeking to destroy their competitors’ stranglehold on the Industry as a whole.

Oh and if you don’t believe me on how seriously NVIDIA are taking this… the Titan Xp Driver update that unlocked its Professional (Workstation) Functionality essentially brings it in line with the P100 in terms of Performance.

The Titan Xp is $1,200, the Quadro P100 is $4,500… with a driver update, they’ve essentially made that P100 obsolete, and basically given up on some $3,300 of pure profit that each Point-of-Sale of said Workstation Card gave them. You don’t do that if you’ve not had an “Oh Shit!” Moment about what the Competition is offering.

via:https://www.reddit.com/r/Amd/comments/6rm3vy/vega_is_better_than_you_think

Wow, AMD is playing the long game. Is 马太太 still going to translate this one?

@台湾佬: It’s already GG, so why bother translating it?

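Three pieces of news that could keep pushing the stock price up now: 1. Vega performance improves by another 30% or more; 2. a strongly profitable third quarter, at 0.15 or more per share; 3. CPU and GPU market share grows by more than 10%.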

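As long as there is no real-world benchmark data, no amount of speculative posts is any use; however good they sound, they are only descriptions on paper.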

The launch-day reviews will show whether it’s a gem or garbage.

You may be a little sensitive about that word.

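As far as this post goes, it’s just the same old Bulldozer routine all over again: the design is ahead of its time and needs a miracle patch.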

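Expectations were high before Bulldozer launched, and the talk about a superior architecture only came afterwards. With Vega this line is being pushed before official reviews are even out, so maybe it shouldn’t be called whitewashing but self-mockery.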

@SS: This is just a reposted comment.

You may be taking this post too seriously.

Could some expert please translate this?

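TL;DR: VEGA’s dual-card Crossfire performance is what represents a single card’s fully optimised performance. VEGA has a nearly 50% advantage over Volta, but for now AMD is holding back so that it can drip-feed improvements later. And NVIDIA currently has no answer to this at all.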

If AMD were that confident, would they still price it at 499? They must be living in a dream.

Nicely whitewashed, in the true Bulldozer tradition.

@scad: Check the source.

This is only speculation; does that count as “whitewashing” too?

I have nothing to say.

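You can tell from how cagey they are being that the performance won’t be good. Look at the CPU division: once it became competitive with Intel, it was very eager to disclose all kinds of data and benchmark scores.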

So the gist is that the VEGA professional card’s drivers don’t support FP32 operations? And games happen to need FP32 a great deal.

The VEGA gaming card’s IPC is equivalent to Fiji’s, i.e. a 1.6 GHz Vega performs at 1.5x a Fury X or better.

Requesting a correct translation, thanks.